ISO 29992: Language-learning services — Requirements

Assessment planning

General: Assessment planning begins with needs analysis and resource planning and includes the creation of an assessment framework.

Needs analysis: The assessment should align with the needs of the sponsor and the intended use.

Aspects of the needs analysis should include:

- Organizational goals and objectives.

- Knowledge, skills, and competencies to be assessed.

- Timing and relevance to the learning event.

- Types of decisions based on the assessment.

- Responsible parties and target population.

- Environmental factors and constraints.

- Duration of assessment validity.

Stakeholder consultation is essential, and the analysis must be documented for future revisions.

Resource Planning:

A resource plan must be developed to cover:

- Assessment selection, design, and development.

- Assessment administration and security.

- Review of the assessment and ethical considerations.

Assessment Framework: The framework is developed in coordination with the sponsor and stakeholders. Based on the needs analysis, it answers questions about:

- The scope, goals, and consequences of the assessment.

- The relationship between assessment goals, methods, scores, and their interpretation.

- How scores are reported.

Additional elements include:

- Conditions for assessment development and implementation.

- Timelines, costs, and assessment environment.

- Prerequisites, standards, tools, and methods.

- Quality assurance, qualifications, maintenance plans, and rules of conduct.

The framework must be approved by the assessment sponsor.

Assessment development

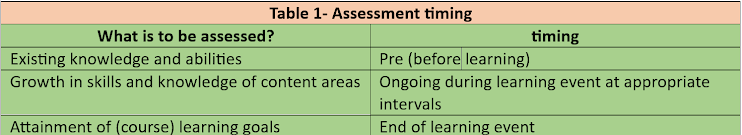

Timing of the Assessment:

- The design phase should specify when assessments occur:

Qualifications for Assessment Developers

Assessment developers are responsible for planning, developing, and ensuring the quality of assessments. They must be trained, qualified, and free from conflicts of interest. Key qualifications and responsibilities include:

- Expertise: Knowledge and experience in the relevant field.

- Training: Skills in data analysis, feedback, documentation, and validation of assessment processes.

- Methodology: Familiarity with various assessment methods and tools.

- Documentation: Clearly defined and documented roles and responsibilities, whether internal or external to the organization.

Means of assessment

The means of assessment should align with the goals identified through the needs analysis. The chosen methods depend on what is being assessed.

Assessment specifications

The assessment specifications document outlines the design, content, scoring, reporting, and intended use of the assessment. It includes details about the physical setting, time allocation, personnel qualifications, required materials, technological needs (hardware, software, security), and the retention period for assessment reports.

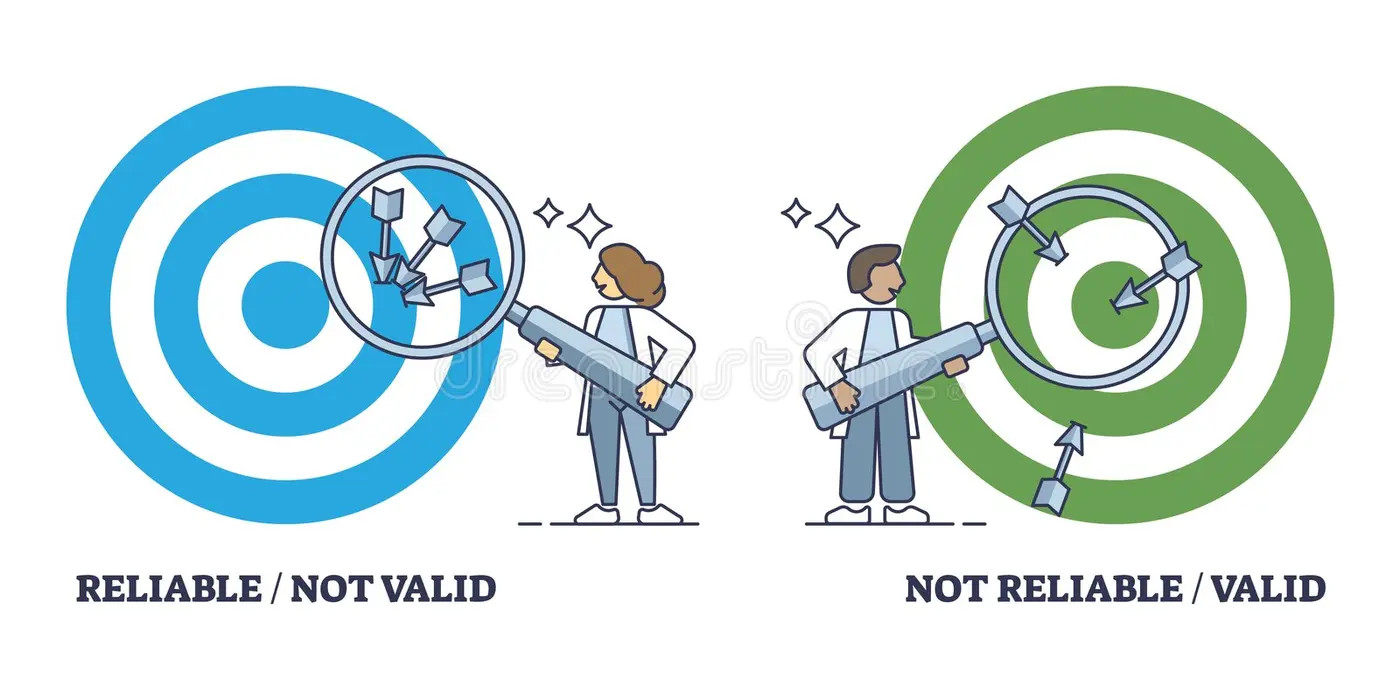

Objectivity, reliability and validity

Objectivity: Assessments must use agreed-upon criteria to ensure consistent scoring across scorers.

Reliability: Scores should be consistent across administrations, locations, and scorers. Retakes within a short period should yield similar results.

Validity: Assessments must measure their intended purpose and reflect real-world or similar activities. Evidence supporting validity should be documented.
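As a rough illustration of the reliability criterion above, agreement between two scorers can be summarized with Cohen's kappa, and consistency between a first sitting and a retake with a Pearson correlation. The sketch below uses only the Python standard library. The function names and the sample scores are hypothetical, and these two statistics are one common choice rather than something this summary prescribes.

```python
from collections import Counter
from math import sqrt


def cohens_kappa(rater_a, rater_b):
    """Chance-corrected agreement between two scorers on categorical ratings."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(rater_a)
    # Share of responses on which both scorers gave the same rating.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each scorer's category frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both scorers used one identical category throughout
    return (observed - expected) / (1 - expected)


def pearson_r(xs, ys):
    """Pearson correlation between two equally long lists of scores."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two score lists of equal length (at least 2)")
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / sqrt(var_x * var_y)


# Hypothetical pass/fail decisions from two scorers on the same ten responses.
scorer_1 = ["pass", "pass", "fail", "pass", "fail",
            "pass", "pass", "fail", "pass", "pass"]
scorer_2 = ["pass", "pass", "fail", "fail", "fail",
            "pass", "pass", "fail", "pass", "pass"]
kappa = cohens_kappa(scorer_1, scorer_2)

# Hypothetical scores from a first sitting and a retake shortly afterwards.
first_sitting = [62, 75, 81, 54, 90]
retake = [65, 73, 84, 57, 88]
retest_r = pearson_r(first_sitting, retake)
```

Values of either statistic close to 1 suggest consistent scoring. What counts as acceptable is a decision for the assessment framework, not something this sketch settles.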

Scoring procedures

Hand Scoring

Use a defined protocol with clear, unambiguous criteria.

Live Scoring

Follow a predefined scoring protocol.

Machine Scoring

Specify technical requirements for scoring technology.

Periodically verify machine accuracy with hand scoring.
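One way to picture the periodic check of machine scoring against hand scoring is an audit in which a sample of responses is scored both ways and the disagreements are flagged for review. The following is a minimal sketch under that assumption. The function name, the `tolerance` parameter, and the response IDs are hypothetical.

```python
def machine_hand_agreement(machine_scores, hand_scores, tolerance=0):
    """Compare machine and hand scores for responses scored by both methods.

    Returns the agreement rate and the sorted IDs of responses whose scores
    differ by more than `tolerance`.
    """
    shared = sorted(machine_scores.keys() & hand_scores.keys())
    if not shared:
        raise ValueError("no responses were scored by both methods")
    mismatches = [
        rid for rid in shared
        if abs(machine_scores[rid] - hand_scores[rid]) > tolerance
    ]
    rate = 1 - len(mismatches) / len(shared)
    return rate, mismatches


# Hypothetical data: all responses are machine scored, a subset is hand scored.
machine = {"r1": 4, "r2": 3, "r3": 5, "r4": 2}
hand_sample = {"r1": 4, "r3": 4, "r4": 2}

rate, flagged = machine_hand_agreement(machine, hand_sample)
```

Here `flagged` lists the responses that a human reviewer would look at again. How often the audit runs and how large the sample is would be set in the assessment specifications.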

General scoring requirements

- Ensure secure transport and storage of assessment materials.

- Define procedures for recording scores at all levels (items, sections, full assessments).

- Provide scorers with answer keys, guides, and item lists, including both required and prohibited items.
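Recording scores at the item, section, and full-assessment levels can be sketched as a small nested record in which the higher-level totals are derived from the item scores instead of being entered separately. The class and field names below are hypothetical, and summing item scores is an assumption; a real assessment might weight or scale sections differently.

```python
from dataclasses import dataclass, field


@dataclass
class SectionRecord:
    """Scores for one section, keyed by item ID."""
    name: str
    item_scores: dict[str, float] = field(default_factory=dict)

    @property
    def total(self) -> float:
        # Section score is derived from the recorded item scores.
        return sum(self.item_scores.values())


@dataclass
class AssessmentRecord:
    """All sections for one examinee."""
    examinee_id: str
    sections: list[SectionRecord] = field(default_factory=list)

    @property
    def total(self) -> float:
        # Full-assessment score is derived from the section scores.
        return sum(section.total for section in self.sections)


# Hypothetical examinee with two sections.
record = AssessmentRecord(
    examinee_id="E-001",
    sections=[
        SectionRecord("listening", {"L1": 1, "L2": 0, "L3": 1}),
        SectionRecord("reading", {"R1": 2, "R2": 1.5}),
    ],
)
section_totals = {s.name: s.total for s in record.sections}
overall = record.total
```

Deriving totals from item scores keeps the three levels consistent with one another, which makes later audits and appeals easier to resolve.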

Reporting of assessment results

Time Frame

- Establish a clear timeline for reporting results to the requesting entity.

Information

- Reports should be clear, concise, descriptive, and usable by both the requesting entity and examinees.

Score Expiration

- Clearly specify and justify score expiration policies.
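A score expiration policy is easier to apply consistently when it is stated as an explicit rule. The sketch below assumes a policy expressed as a fixed number of days from the date of issue. The function names and the 730-day period are hypothetical examples, not values taken from the standard.

```python
from datetime import date, timedelta


def expiry_date(issued_on: date, validity_days: int) -> date:
    """Last day on which a score issued on `issued_on` is still valid."""
    return issued_on + timedelta(days=validity_days)


def score_is_valid(issued_on: date, validity_days: int, on_date: date) -> bool:
    """True if the score is valid on `on_date` under a fixed-period policy."""
    return issued_on <= on_date <= expiry_date(issued_on, validity_days)


# Hypothetical policy: scores remain valid for 730 days after issue.
issued = date(2024, 1, 15)
expires = expiry_date(issued, 730)
valid_mid_2025 = score_is_valid(issued, 730, date(2025, 6, 1))
valid_feb_2026 = score_is_valid(issued, 730, date(2026, 2, 1))
```

Publishing the computed expiry date on the score report is one way to make the policy visible to both the requesting entity and the examinee.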

Arbitration, Grievances, and Appeals

- Publish defined policies outlining the appeal process, including conditions for requests, roles, responsibilities, and timelines.

Technical Documentation

- Maintain technical documentation.

Administration of assessments

Guidelines

Create clear guidelines for how the assessment will be run, covering things like delivery, proctoring, scoring, procedures, materials, reporting results, and handling complaints, appeals, reassessments, and record-keeping. Make delivery as consistent as possible, with reasonable adjustments to ensure fairness for everyone taking the test.

Assessment Security Plan

Create a security plan addressing all phases of the assessment process, including:

- Protecting personal data.

- Defining roles and responsibilities of security staff.

- Listing security documents, like non-disclosure agreements and policy guides.

- Training procedures for security staff.

- Requirements for physical and digital security.

- Methods for checking compliance with security rules.

- A plan for responding to security breaches.

- Measures to prevent and handle improper behavior.

Proctors

Proctor Qualifications

Proctors should avoid conflicts of interest, such as being related to an examinee or their instructor.

Proctor Responsibilities

- Maintain the integrity of the assessment environment.

- Verify the identity of examinees.

- Communicate with assessment administrators.

- Ensure the assessment follows set procedures.

Qualifications of Scorers/Raters

Scorers/raters, who may help develop and administer the assessment, should:

- Be trained and qualified to assess the examinee's competence based on agreed criteria or standards.

- Have relevant knowledge and experience in the field.

Maintenance and Revision

Assessment Maintenance Plan:

- Ensures the assessment remains valid and reliable.

- Includes:

  - A list of documents related to reliability and validity.

  - Specifications for evaluating assessment performance.

  - Processes for reviewing items, conducting statistical analyses, and retraining scorers.

  - A schedule for these processes throughout the assessment's life cycle.

  - Metrics to determine item/assessment life cycle.

  - Recommendations for handling maintenance review results.

  - Resources needed for maintenance (money, contracts, personnel).
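The statistical analyses mentioned in the maintenance plan often start with classical item analysis: how difficult each item is and how well it separates stronger from weaker examinees. The sketch below is one simple version, assuming items scored 0 or 1 and using the upper-lower group method for discrimination. The function name, the one-third group size, and the sample responses are hypothetical.

```python
def item_statistics(responses):
    """Classical item analysis for dichotomously scored (0/1) items.

    `responses` is a list with one row per examinee; each row holds that
    examinee's 0/1 score on every item, in the same item order.
    """
    if not responses or any(len(row) != len(responses[0]) for row in responses):
        raise ValueError("responses must be non-empty rows of equal length")
    n = len(responses)
    totals = [sum(row) for row in responses]
    # Rank examinees by total score; compare the bottom and top thirds.
    ranked = sorted(range(n), key=lambda i: totals[i])
    k = max(1, n // 3)
    low, high = ranked[:k], ranked[-k:]
    stats = []
    for j in range(len(responses[0])):
        difficulty = sum(row[j] for row in responses) / n  # proportion correct
        p_high = sum(responses[i][j] for i in high) / k
        p_low = sum(responses[i][j] for i in low) / k
        stats.append({
            "item": j,
            "difficulty": difficulty,
            "discrimination": p_high - p_low,
        })
    return stats


# Hypothetical responses: six examinees, four items.
responses = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 1],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]
stats = item_statistics(responses)
```

Items with very high or very low difficulty, or with discrimination near zero or negative, are typical candidates for the item review that the maintenance plan schedules.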

Assessment Revision Plan:

- Periodic reviews based on the maintenance plan.

- Revisions follow specific guidelines:

  - When changes are allowed or mandatory.

  - Mechanisms for refreshing items (replacing items or forms).

  - Circumstances under which cut scores can change.

  - Limits on changes in the assessor pool.

  - Strategies to validate new items.

  - Resources required for revisions.

Fairness

Formal Agreement:

- A written agreement prevents misunderstandings and outlines:

  - Examination fees

  - Rights and duties of participants

  - Prerequisites for taking the assessment

  - Description of the assessment

  - Personal data protection

  - Timeframe for reporting results

Non-discrimination:

- All examinees should have an equal opportunity to take the assessment, regardless of age, gender, nationality, religion, or disabilities (visual, auditory, physical).

- Reasonable accommodations should be made for examinees with disabilities, such as extra time or alternative assessments.

- Consider access based on the examinee's location.

Neutrality (8.4):

- The assessment process must be unbiased, ensuring neutrality from assessors, examinees, and others.

- Ideally, assessors should not be the examinee's instructor to avoid conflicts of interest.

Rules of Conduct

- Clear rules should ensure fair conditions, including:

  - Identification requirements

  - Allowed materials

  - Timing and departure procedures

- Assessment sponsors must ensure these rules are followed.

Information Provided to Examinees:

- Key information should be shared with examinees, including:

  - Rules of conduct

  - Proctoring procedures

  - Security requirements

  - Abilities the assessment measures

  - Level of abilities the assessment targets

  - Contact details for inquiries

Ethics

Responsibilities

All parties involved in assessments have ethical duties. They are responsible for identifying, communicating, and documenting any local responsibilities or obligations. Ethical implications of actions must be considered throughout the assessment process.

Assessment Information

Information about the assessment must be truthful and accurate. It is unethical to misrepresent assessment details to any involved parties.

Information Security

Assessment developers and users must ensure:

- Confidentiality of collected data.

- Protection and security of data used in assessments.

- Secure distribution, retrieval, and transmission of information.

- Proper structure for information management systems, along with user manuals.

- Ongoing training for those managing the information system.